About

The Cornell Phonetics Lab is a group of students and faculty who are curious about speech. We study patterns in speech, in both movement and sound. We do a variety of research: experiments, fieldwork, and corpus studies. We test theories and build models of the mechanisms that create patterns. Learn more about our Research. See below for information on our events and our facilities.

Upcoming Events

25th March 2021 04:30 PM

Linguistics Colloquium Speaker: Dr. Robert Ladd of the University of Edinburgh

The Department of Linguistics proudly presents Professor Emeritus Robert Ladd from the University of Edinburgh. Dr. Ladd is an alumnus of Cornell and of the Linguistics Department (MA Linguistics 1972; PhD Linguistics 1978).

Dr. Ladd will give a talk titled "It's prominence, but not as we know it" (Adventures in the phonology and phonetics of stress).

Abstract:

A simple notion of “prominence” has taken centre-stage in recent phonetic work on how prosodic features are used to signal pragmatic and syntactic properties. A good deal of current experimental work attempts to identify the range of phonetic and other features that distinguish words judged “prominent” from other words.

But assumptions about the relation between phonetic prominence and syntactic/pragmatic importance (focus, informativeness, etc.) - including the assumption that a language will exhibit such a relation - are Eurocentric and now fairly uncontroversially wrong. More importantly, prominence is ill-defined, and little attempt is made to relate the recent findings of phonetic investigation to theoretical ideas about “stress”.

If we think about stress in terms of abstract strength relations in a hierarchical structure - i.e. in terms inspired by Liberman’s original proposal for metrical phonology and his notion of lawful “tune-text association” - we can go beyond trying to account phonetically for impressionistic “prominence” and can recognize genuine typological differences in the way languages use suprasegmental phonetic resources.

Location:

26th March 2021 09:55 AM

Dr. Robert Hawkins will talk on "Coordinating on meaning in communication"

This week in Computational Psycholinguistics Discussions (C.Psyd), we're excited to host invited speaker Dr. Robert Hawkins of Princeton. Talk details below.

Title: Coordinating on meaning in communication

Abstract: Languages are powerful solutions to coordination problems: they provide stable, shared expectations about how the words we say correspond to the beliefs and intentions in our heads. However, in a non-stationary environment with new things to talk about and new partners to talk with, linguistic knowledge must be flexible: old words acquire new ad hoc or partner-specific meanings on the fly.

In this talk, I'll share some recent work investigating the cognitive mechanisms that support this balance between stability and flexibility in human communication, which motivates the development of more adaptive, interactive language models in NLP.

First, I'll introduce a computational framework recasting communication as a hierarchical meta-learning problem: community-level conventions and norms provide stable priors for communication, while rapid learning within each interaction allows for partner- and context-specific common ground. I'll evaluate this model using a new corpus of natural-language communication collected in a task where participants are grouped into small communities and take turns referring to ambiguous tangram objects. I'll then describe how we scaled up this framework to neural architectures that can be deployed in real-time interactions with human partners.

Taken together, this line of work aims to build a computational foundation for a more dynamic and socially-aware view of linguistic meaning in communication.

Bio: Robert Hawkins is currently doing his post-doc at Princeton. He spent his undergraduate years at Indiana University and did his PhD at Stanford. Broadly, Robert works at the intersection of cognitive science and machine learning and is interested in the cognitive mechanisms that allow people to flexibly coordinate and collaborate with one another, particularly those that allow social conventions and norms to emerge.

Location:

Facilities

The Cornell Phonetics Laboratory (CPL) provides an integrated environment for the experimental study of speech and language, including its production, perception, and acquisition.

Located in Morrill Hall, the laboratory consists of six adjacent rooms and covers about 1,600 square feet. Its facilities include a variety of hardware and software for analyzing and editing speech, for running experiments, for synthesizing speech, and for developing and testing phonetic, phonological, and psycholinguistic models.

Web-Based Phonetics and Phonology Experiments with LabVanced

The Phonetics Lab licenses the LabVanced software for designing and conducting web-based experiments.

LabVanced has particular value for phonetics and phonology experiments because of its:

- Flexible audio/video recording capabilities and online eye-tracking

- Presentation of any kind of stimuli, including audio and video

- Highly accurate response time measurement

- Graphical task builder, which lets researchers build experiments interactively without having to write any code

Students and faculty are currently using LabVanced to design web experiments involving eye-tracking, audio recording, and perception studies.

Subjects are recruited via several online systems:

- Prolific and Amazon Mechanical Turk - subjects for web-based experiments

- Sona Systems - Cornell subjects for LabVanced experiments conducted in the Phonetics Lab's Sound Booth

Computing Resources

The Phonetics Lab maintains two Linux servers that are located in the Rhodes Hall server farm:

- Lingual - This Ubuntu Linux web server hosts the Phonetics Lab Drupal websites, along with a number of event and faculty/grad student HTML/CSS websites.

- Uvular - This Ubuntu Linux dual-processor, 24-core, two-GPU server is the computational workhorse for the Phonetics Lab, and is primarily used for deep-learning projects.

In addition to the Phonetics Lab servers, students can request access to additional computing resources of the Computational Linguistics lab:

- Badjak - a Linux GPU-based compute server with eight NVIDIA GeForce RTX 2080Ti GPUs

- Compute server #2 - a Linux GPU-based compute server with eight NVIDIA A5000 GPUs

- Oelek - a Linux NFS storage server that supports Badjak.

These servers, in turn, are nodes in the G2 Computing Cluster, which currently consists of 195 servers (82 CPU-only servers and 113 GPU servers) with a combined total of about 7,400 CPU cores and 698 GPUs.

The G2 Cluster uses the SLURM Workload Manager for submitting batch jobs that can run on any available server or GPU on any cluster node.
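As a minimal sketch of what a batch job looks like, the Python helper below generates a SLURM batch script built from standard `#SBATCH` directives (`--job-name`, `--output`, `--cpus-per-task`, `--mem`, `--time`, `--gres`, `--partition`). The `SlurmJob` class, its default resource values, the job command, and any partition name are hypothetical illustrations; G2's actual partitions, limits, and site policies are not described here and should be checked before submitting.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SlurmJob:
    """Hypothetical helper that renders a SLURM batch script as text."""

    job_name: str
    command: str                      # e.g. "python train.py" (hypothetical)
    gpus: int = 1                     # number of GPUs requested via --gres
    cpus_per_task: int = 4
    mem: str = "16G"
    time: str = "04:00:00"            # wall-clock limit, HH:MM:SS
    partition: Optional[str] = None   # hypothetical: G2 partition names are not given here

    def to_script(self) -> str:
        lines = [
            "#!/bin/bash",
            f"#SBATCH --job-name={self.job_name}",
            # %j is replaced by SLURM with the numeric job ID
            f"#SBATCH --output={self.job_name}_%j.out",
            "#SBATCH --ntasks=1",
            f"#SBATCH --cpus-per-task={self.cpus_per_task}",
            f"#SBATCH --mem={self.mem}",
            f"#SBATCH --time={self.time}",
        ]
        # Only request GPUs when the job actually needs them
        if self.gpus > 0:
            lines.append(f"#SBATCH --gres=gpu:{self.gpus}")
        if self.partition:
            lines.append(f"#SBATCH --partition={self.partition}")
        # Blank line separates the directives from the job's commands
        lines.append("")
        lines.append(self.command)
        return "\n".join(lines) + "\n"


if __name__ == "__main__":
    job = SlurmJob(job_name="asr-train", command="python train.py", gpus=2)
    print(job.to_script())
```

Writing the returned text to a file such as `train.sh` and running `sbatch train.sh` would queue the job; SLURM then starts it on whichever node has the requested CPUs, memory, and GPUs free.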

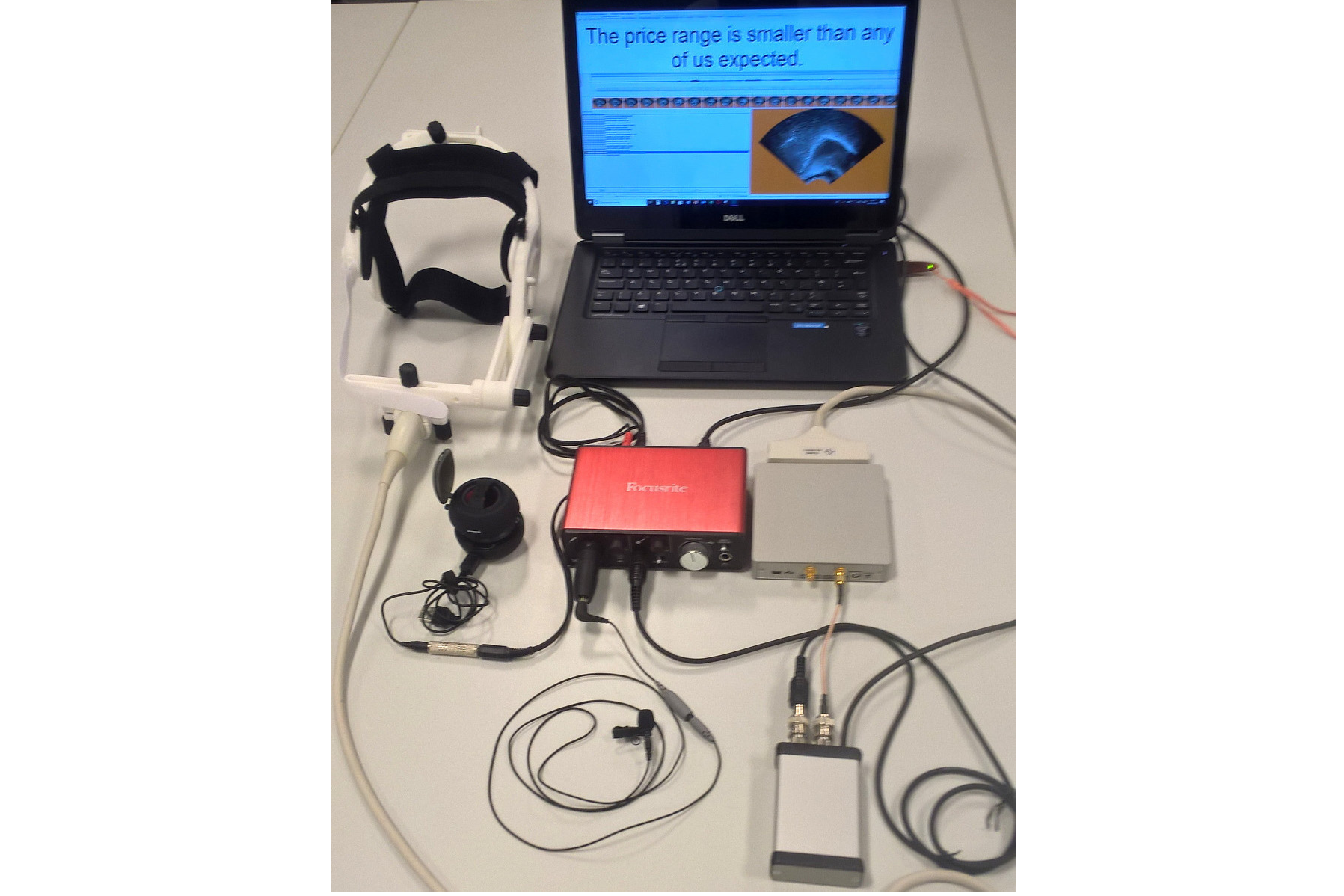

Articulate Instruments - Micro Speech Research Ultrasound System

We use this Articulate Instruments Micro Speech Research Ultrasound System to investigate how fine-grained variation in speech articulation connects to phonological structure.

The ultrasound system is portable and non-invasive, making it ideal for collecting articulatory data in the field.

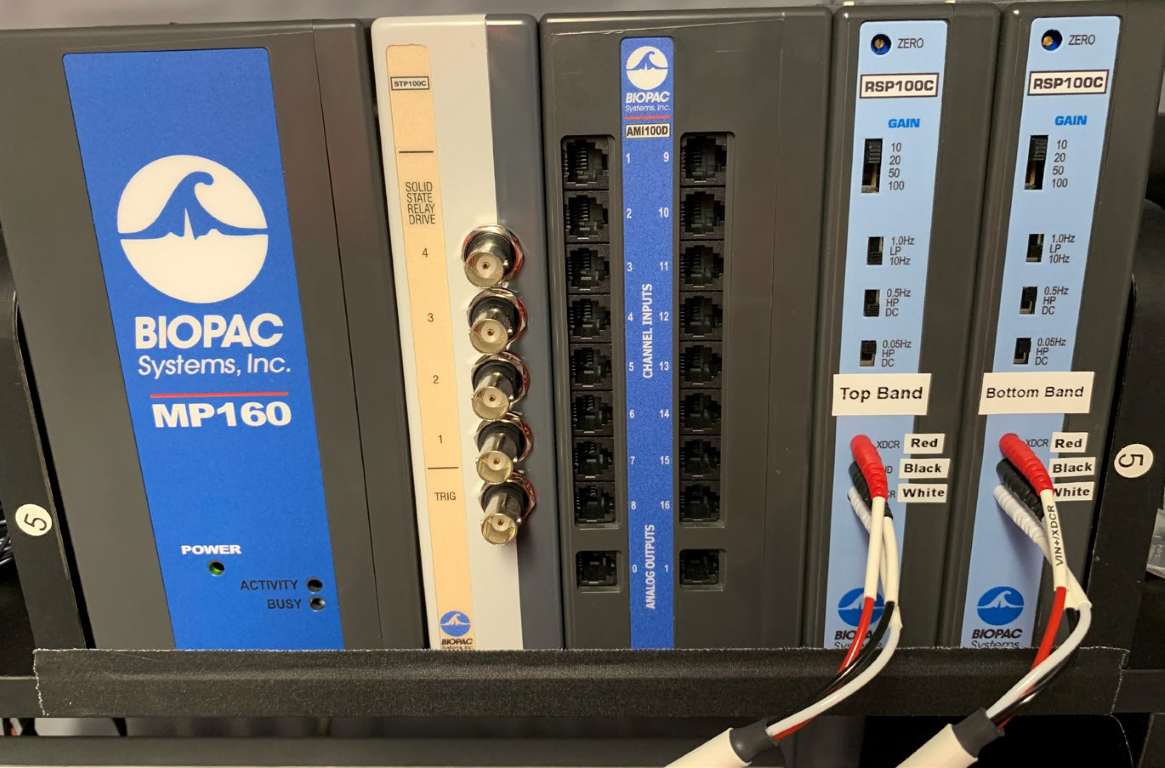

BIOPAC MP-160 System

The Sound Booth Laboratory has a BIOPAC MP-160 system for physiological data collection. This system supports two BIOPAC Respiratory Effort Transducers and their associated interface modules.

Language Corpora

- The Cornell Linguistics Department has more than 915 language corpora from the Linguistic Data Consortium (LDC), consisting of high-quality text, audio, and video corpora in more than 60 languages. In addition, we receive three to four new language corpora per month under an LDC license maintained by the Cornell Library.

- The Linguistics Department web page lists all our holdings, as well as our licensed non-LDC corpora.

- These and other corpora are available to Cornell students, staff, faculty, post-docs, and visiting scholars for research in the broad area of "natural language processing", which of course includes all ongoing Phonetics Lab research activities.

- A Confluence wiki page (available only to Cornell faculty and students) outlines the procedures for accessing corpora for faculty-supervised research.

Speech Aerodynamics

Studies of the aerodynamics of speech production are conducted with our Glottal Enterprises oral and nasal airflow and pressure transducers.

Electroglottography

We use a Glottal Enterprises EG-2 electroglottograph for noninvasive measurement of vocal fold vibration.

Real-time vocal tract MRI

Our lab is part of the Cornell Speech Imaging Group (SIG), a cross-disciplinary team of researchers using real-time magnetic resonance imaging to study the dynamics of speech articulation.

Articulatory movement tracking

We use the Northern Digital Inc. Wave motion-capture system to study speech articulatory patterns and motor control.

Sound Booth

Our isolated sound recording booth serves a range of purposes, from basic recording to perceptual, psycholinguistic, and ultrasonic experimentation.

We also have the necessary software and audio interfaces to perform low-latency, real-time auditory feedback experiments via MATLAB and Audapter.